
Securing Generative AI: Building Responsible, Enterprise-Ready Applications


Generative AI is revolutionizing enterprise operations, opening unprecedented opportunities for innovation, efficiency, and competitive advantage. The paramount question for enterprise leaders is how to keep these AI systems safe and aligned with their business values.

Yet, with great power comes great responsibility. Enterprises adopting Generative AI must grapple with critical security, privacy, and ethical challenges. Without robust safeguards, sensitive data can be exposed, regulatory compliance may be breached, and customer trust can erode, jeopardizing both reputation and long-term business success.

For organizations aiming to leverage AI at scale, building responsible, enterprise-ready applications is no longer optional; it is imperative. This blog explores the key practices, frameworks, tools, and strategies that enterprise leaders can implement to secure their Generative AI applications, mitigate risks, and ensure ethical, transparent, and trustworthy AI adoption.

Let’s dive into the approaches enterprises can use to future-proof their AI initiatives, accelerate innovation, and maintain the confidence of both customers and stakeholders.

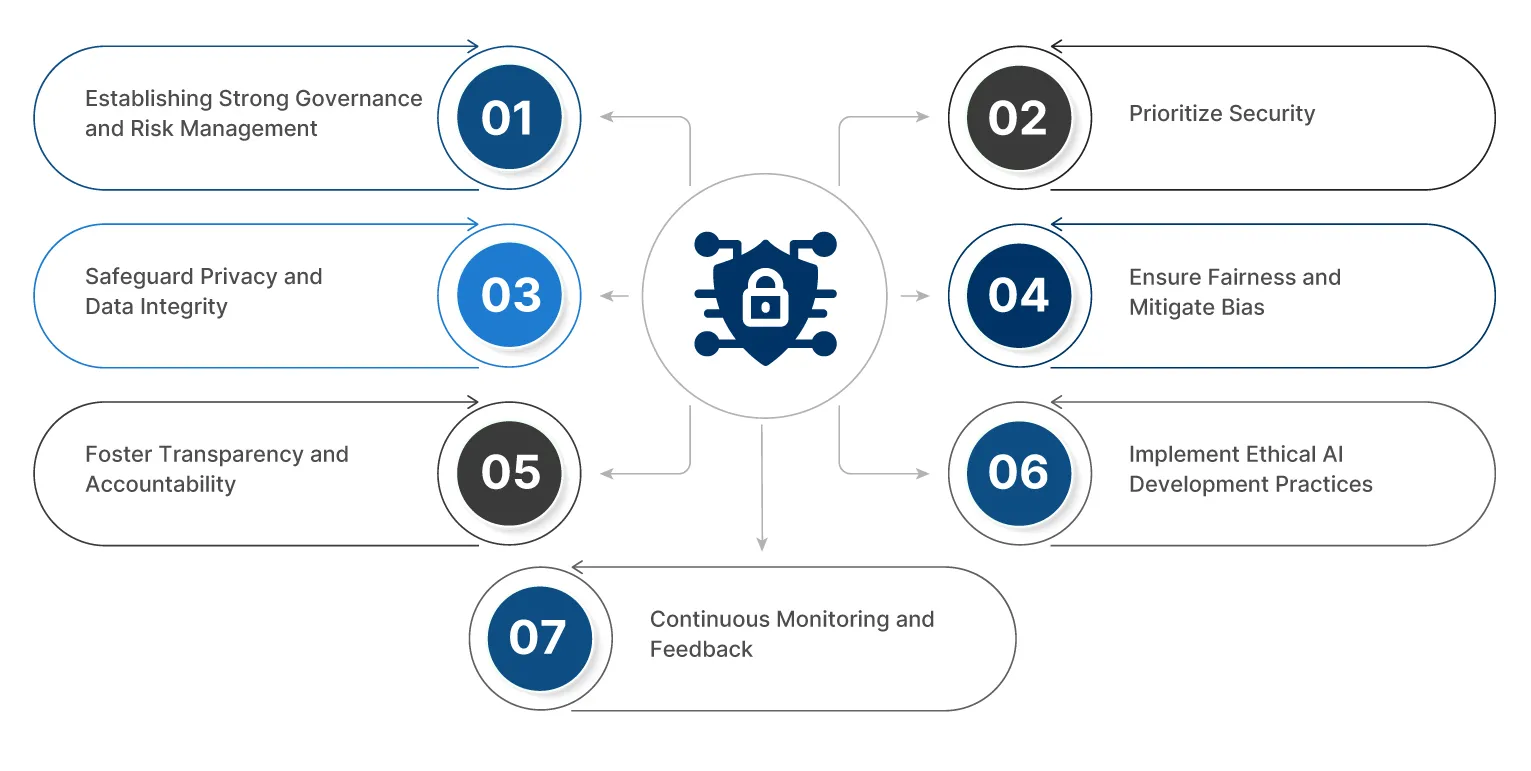

Key Practices for Building Secure and Responsible GenAI Applications

There is no one-size-fits-all way of building secure and responsible Generative AI (GenAI) applications. Every business has its own needs, but a set of basic practices applies broadly. These practices help keep your AI systems safe and ethical, which in turn builds goodwill and supports sustainable adoption.

1. Establish Strong Governance and Risk Management

Start with lightweight governance that scales. Register use cases, tier them by risk, and require a quick privacy/impact review for medium and high tiers. Keep a living policy and model card so decisions, assumptions, and owners are clear.
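
As a rough illustration of that workflow, here is a minimal Python sketch of a use-case registry that tiers entries by risk and flags medium- and high-tier entries that still lack a privacy/impact review. The names `RiskTier`, `UseCase`, and `UseCaseRegistry` are hypothetical and chosen only for this example; a real program would likely sit on top of an existing GRC or ticketing system.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class UseCase:
    name: str
    owner: str                 # accountable person or team
    tier: RiskTier
    review_done: bool = False  # has the privacy/impact review been completed?


class UseCaseRegistry:
    """Hypothetical in-memory registry; illustrative only."""

    def __init__(self) -> None:
        self._cases = {}

    def register(self, case: UseCase) -> None:
        # Re-registering a name overwrites the earlier entry.
        self._cases[case.name] = case

    def needs_review(self, case: UseCase) -> bool:
        # Only medium and high tiers require a review before launch.
        return case.tier in (RiskTier.MEDIUM, RiskTier.HIGH) and not case.review_done

    def blocked(self) -> list:
        # Names of use cases that cannot ship until their review is done.
        return sorted(c.name for c in self._cases.values() if self.needs_review(c))
```

For example, registering a low-tier FAQ bot and a high-tier claims summarizer would leave only the summarizer in `blocked()` until its review is marked complete.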

2. Prioritize Security

When AI systems handle sensitive information, security cannot be an afterthought. All data in your systems, and all activity performed within them, must be protected. A zero-trust model helps achieve this: every request is verified before it is trusted.

3. Safeguard Privacy and Data Integrity

With AI, everything depends on trust. Users will leave if they suspect their data is being mishandled. The first step toward guaranteeing privacy is anonymizing sensitive information, followed by data protection controls such as data loss prevention (DLP).
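
To make the anonymization step concrete, the sketch below masks two kinds of sensitive values before text reaches a model or a log. It is a simplified illustration, not a DLP product: the `PATTERNS` table and the `redact` helper are names invented for this example, and the regular expressions only cover email addresses and US-style Social Security numbers.

```python
import re

# Hypothetical pattern table; real DLP tooling covers far more data types.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each matched value with a bracketed label such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


print(redact("Contact jane.doe@example.com, SSN 123-45-6789."))
# prints: Contact [EMAIL], SSN [SSN].
```

Pattern matching like this catches only well-formed values, so it is usually one layer among several rather than a complete privacy control.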

4. Ensure Fairness and Mitigate Bias

AI can unintentionally reinforce existing societal prejudices, which can harm both individuals and organizations. To prevent biased results, use diverse training data and run fairness checks frequently, monitoring your AI’s outputs to ensure they remain inclusive and fair.
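
One simple fairness check is to compare how often each group receives a favorable outcome. The sketch below computes per-group selection rates and the gap between the highest and lowest rate (often called the demographic parity difference). The function names and the sample data are made up for illustration, and this is only one of many fairness metrics.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """outcomes: iterable of (group, favorable) pairs, favorable being a bool."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, favorable in outcomes:
        totals[group] += 1
        positives[group] += int(favorable)
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(outcomes):
    """Difference between the highest and lowest group selection rate."""
    rates = selection_rates(outcomes)
    return max(rates.values()) - min(rates.values())


# Made-up sample: group "a" is approved 2 of 4 times, group "b" 1 of 4 times.
sample = [("a", True), ("a", True), ("a", False), ("a", False),
          ("b", True), ("b", False), ("b", False), ("b", False)]
print(parity_gap(sample))  # prints: 0.25
```

A gap near zero suggests similar treatment across groups on this metric; what counts as an acceptable gap is a policy decision, not something the code can settle.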

5. Foster Transparency and Accountability

AI should never operate as a “black box.” If users can’t understand how or why certain decisions are made, they’re not going to trust the system. Being transparent means documenting how AI makes its decisions and communicating this clearly to users. It’s also about getting input from a broad range of people, like ethics experts, developers, and even end-users, during development. Transparency and accountability should be part of the DNA of your AI system.

6. Implement Ethical AI Development Practices

Ethical concerns should always come first in any AI project. This includes following industry standards, ensuring your AI doesn’t harm users, and prioritizing human well-being. The goal is to create AI systems that benefit society, not just your business. Bring in different perspectives, especially from ethicists and experts in diverse fields, so your AI stays aligned with societal values while still meeting your business goals.

7. Continuous Monitoring and Feedback

Building a responsible GenAI system doesn’t stop at launch. It’s an ongoing process that requires continuous monitoring. You’ll need to track how the AI performs, how users interact with it, and how it adapts to changes in the real world. Collecting user feedback and tweaking the system regularly will help ensure it stays secure, effective, and aligned with your goals. AI models should be updated with fresh data and best practices to keep them sharp and relevant.
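
As one possible shape for such monitoring, the sketch below tracks the share of recent responses that were flagged (by a guardrail, a reviewer, or user feedback) over a sliding window and signals when that share crosses a threshold. The class name `FlagRateMonitor` and its default window and threshold are assumptions for this example, not recommended values.

```python
from collections import deque


class FlagRateMonitor:
    """Hypothetical sliding-window monitor for flagged model responses."""

    def __init__(self, window: int = 100, threshold: float = 0.05) -> None:
        self.events = deque(maxlen=window)  # keeps only the latest `window` events
        self.threshold = threshold

    def record(self, flagged: bool) -> None:
        self.events.append(bool(flagged))

    def rate(self) -> float:
        # Fraction of flagged responses in the current window.
        return sum(self.events) / len(self.events) if self.events else 0.0

    def should_alert(self) -> bool:
        return self.rate() > self.threshold
```

In practice the alert would feed an incident process or dashboard; the point here is only that monitoring needs an explicit signal and an explicit threshold.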

Best Tools and Frameworks for GenAI Security

Selecting the right tools and frameworks makes a major difference when building secure and responsible GenAI applications. Below are some frameworks and tools that can provide that support.

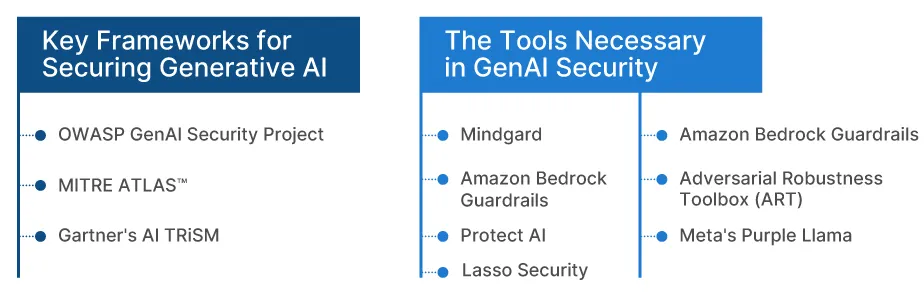

Key Frameworks for Securing Generative AI

It is important to have a solid base when developing GenAI systems. The appropriate framework will not only assist you in handling risks and keeping your system secure, but it will also enable your models to work in a responsible manner.

1. Tx-AEGIS

Our Tx-AEGIS framework is a holistic model for AI security and governance. The model is organized into practical pillars like RAG and prompt guardrails, model governance and privacy, supply-chain and platform hardening, monitoring/IR, red-team/TEVV, and vector-store controls for delivering a hardened stack. Tx-AEGIS is built to make AI adoption secure, compliant, and trustworthy to strengthen business resilience.

2. OWASP GenAI Security Project

This initiative provides a broad set of resources, such as the Threat Defense COMPASS dashboard, which helps organizations assess threats and vulnerabilities and choose mitigation strategies. It offers a comprehensive way of dealing with security threats in GenAI systems.

3. MITRE ATLAS™

MITRE ATLAS offers a structured way to identify and handle AI-specific security risks. It covers the whole AI lifecycle, giving insight into how to address vulnerabilities during the development and deployment of a system so that it stays secure at every stage.

4. Gartner’s AI TRiSM

This model centers on AI Trust, Risk, and Security Management (TRiSM). It is concerned with making AI explainable, managing its performance, and keeping it secure. The aim is AI systems that remain transparent, accountable, and consistent with privacy and security policies.

Essential Tools for GenAI Security

Besides frameworks, a range of tools can help secure AI models and data. These tools keep your AI applications protected, efficient, and adaptable, both by fending off attacks and by monitoring systems around the clock.

1. Mindgard

Mindgard provides both offensive and defensive capabilities, with automated red teaming and real-time monitoring. It was built to address the security issues specific to GenAI systems and helps teams identify and prevent threats proactively.

2. Lasso Security

Lasso Security provides contextual data protection that identifies security threats and sends alerts in real time. Its focus on AI systems helps safeguard sensitive data during development, deployment, and ongoing use.

3. Protect AI

Protect AI offers AI Security Posture Management (AI-SPM) to help maintain a clear view of your security posture. It also maintains the LLM Guard toolkit, which helps secure large language models against adversarial threats and attacks.

4. Wiz

Wiz is a unified cloud security platform that provides AI-SPM capabilities to secure your AI models, pipelines, and data in the cloud. It helps secure your entire AI infrastructure, from data storage to model deployment.

5. Adversarial Robustness Toolbox (ART)

ART is a Python library, originally developed by IBM and now hosted by the LF AI & Data Foundation, that lets developers and researchers evaluate and test AI models against adversarial attacks and assess their robustness. It is an important tool for keeping your models resilient against increasingly advanced threats.

6. Meta’s Purple Llama

Purple Llama by Meta is an open-source project that aims to make generative AI models safer and more accountable. It offers guidelines and resources for deploying Large Language Models (LLMs) securely and ethically, so that they are not only secure but also aligned with responsible AI principles.

7. Amazon Bedrock Guardrails

Amazon Bedrock Guardrails lets businesses apply their own safeguards to models on Amazon Bedrock. It helps ensure that AI applications align with your ethical guidelines and are deployed responsibly across your company.

Challenges and Solutions in Scaling Secure GenAI Applications

Scaling Generative AI systems brings both promising opportunities and significant challenges. The larger and more sophisticated these applications become, the harder it is to ensure they are not compromised, biased, or unaccountable.

The three main challenges, and their solutions, are:

1. Managing Increased Data Privacy Risks

As GenAI systems scale, they tend to work with larger datasets that contain more personal and sensitive information. This raises the risk of privacy violations, particularly as data protection legislation tightens.

Solution:

To keep data secure, companies can adopt technologies such as homomorphic encryption, which allows computation on encrypted data, and differential privacy, which adds calibrated noise so that results do not reveal any individual’s record. It is also essential to anonymize data wherever you can and to adhere to data privacy policies as you grow.
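
To give a feel for differential privacy, here is a minimal sketch of the Laplace mechanism applied to a counting query. A count changes by at most 1 when one person is added or removed (sensitivity 1), so adding Laplace noise with scale 1/ε gives ε-differential privacy for that single query. The function names and sample data are illustrative assumptions, and a production system would use a vetted library rather than hand-rolled noise.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two independent exponentials with mean `scale`
    # follows a Laplace distribution centered at 0 with that scale.
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(values, predicate, epsilon: float, rng=None) -> float:
    """Noisy count of items matching `predicate` (sensitivity 1)."""
    rng = rng or random.Random()
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)


ages = [23, 35, 41, 52, 67]  # made-up sample data
noisy = private_count(ages, lambda age: age >= 40, epsilon=1.0)
print(noisy)  # close to 3, but different on every run
```

Smaller values of ε add more noise and give stronger privacy; repeated queries consume privacy budget, which this sketch does not track.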

2. Maintaining Fairness Across Larger Datasets

Scaling up AI systems means dealing with larger datasets, which can surface biases that were not evident when the system was smaller. Without appropriate checks, this can lead to unfair or biased results.

Solution:

It is important to audit your models for fairness regularly, particularly as they scale. Tools such as AI Fairness 360 can help identify possible biases in your models early so you can fix them. By building fairness assessments into your development process, you can make your AI fair to everyone.

3. Keeping Security Tight as the System Grows

The larger your AI system, the larger its attack surface. Bigger models and more data mean more potential vulnerabilities, such as adversarial attacks and data breaches. Security at scale needs consistent, ongoing attention.

Solution:

Tools from Wiz, Mindgard, and Protect AI can provide strong security for growing AI systems. They offer continuous monitoring, helping you catch threats before they compromise your infrastructure, even as your system expands.

Future Trends in GenAI Security and Responsibility

Generative AI is an emerging field, and its security and ethical issues are changing fast. Keeping up with emerging trends helps organizations anticipate risks and respond to changes in AI technology. Trends to watch include:

- Cybersecurity AI: AI is improving cybersecurity through capabilities such as threat detection, real-time vulnerability analysis, and proactive prevention of cyberattacks.

- AI Regulation and Standards: As regulations such as California’s AI regulations, the Colorado AI Act, and the EU AI Act come into force, companies should adopt best practices to ensure that GenAI meets fair, transparent, and ethical standards.

- AI-Driven Ethics: Explainable AI (XAI) is a recent development that allows systems to explain their decisions, which makes them more accountable and easier to secure.

- AI Transparency and Accountability Tools: New tools are emerging that improve the transparency of AI decision-making, helping companies adhere to ethical standards and strengthen accountability.

Harness the Power of Secure GenAI Applications

At TxMinds, we have expertise in providing AI-based solutions that help businesses transform faster. Our Generative AI development services help companies automate their workflows, increase productivity, and modernize their legacy systems. Beyond that, our approach incorporates models such as GPT-4, Llama, and PaLM 2 to deliver scalable and secure AI applications designed to meet the needs of a particular industry.
