Recommended Blogs

Prevent Bad Data Early: A Modern Data Validation Process for Analytics and ML

Table of Content

- Why Early Data Validation is Crucial for Business Success

- The True Meaning of Modern Data Validation for Enterprises

- The Ultimate Framework to Prevent Bad Data Early in the Process

- How Organizational and Governance Excellence Drives Data Trust

- How TxMinds Helps You Prevent Bad Data Early with Scalable Solutions

Imagine making a high-stakes business decision based on flawed data. The result? Lost revenue, missed opportunities, and a significant hit to your business reputation.

According to a report, over a quarter of organizations estimate they lose more than USD 5 million annually due to poor data quality. Whereas, 7% report losses of USD 25 million or more because of data issues. For C-level executives, this is a harsh reality that directly impacts the bottom line.

But here is the perfect solution. Early data validation can prevent these costly mistakes. By addressing data issues at the source, companies ensure the integrity of their analytics and machine learning models. It further protects revenue, reputation, and decision-making.

In this blog, we will dive into why early data validation is crucial for large enterprises, how to implement it effectively, and how our Data Quality Assurance Services can help you safeguard your data, driving smarter and more reliable business outcomes.

Key Takeaways

- Over 25% of organizations lose more than USD 5 million annually due to poor data quality.

- Early data validation is more cost-effective than fixing issues later.

- Automated systems powered by AI ensure real-time, accurate data validation.

- Strong data ownership and feedback loops help maintain data reliability and accuracy.

Why Early Data Validation is Crucial for Business Success

Early data validation is crucial to running an efficient and data-driven business. When implemented correctly, it ensures that data your business decisions rely on is clean, accurate, and ready to deliver valuable insights.

Key Reasons Why Early Data Validation is Critical

- Cost Savings & Efficiency: Catching issues early is far more cost-effective than dealing with them later. By addressing data problems at the source, businesses can avoid expensive mistakes and delays that might disrupt operations or slow growth.

- Better Decision-Making: When the data is validated, you can trust it. This empowers your team to make faster, more confident decisions, based on reliable facts rather than assumptions.

- Streamlined Operations: With clean data flowing into your systems, operations run more smoothly. There’s less time spent fixing problems, and more time spent driving growth.

- Minimized Compliance Risks: Early validation ensures that your data complies with regulatory standards, reducing the risk of costly fines and safeguarding your reputation in the marketplace.

- Stronger Customer Relationships: Accurate, reliable data leads to smoother interactions with customers. Fewer errors in orders, billing, and delivery means stronger trust and loyalty from your customers.

- Optimized Data Processes: Embedding validation directly into your ETL processes ensures that only the best-quality data enters your systems, laying a strong foundation for every subsequent step in the pipeline.

The True Meaning of Modern Data Validation for Enterprises

Modern data validation has evolved beyond a simple technical task. It is now a strategic process that ensures your data is accurate, reliable, and aligned with business goals. By embedding validation early and continuously throughout the process, businesses can ensure data integrity, operational efficiency, and compliance.

Key Components of Modern Data Validation

- Proactive Approach: Modern validation starts as soon as data is entered into the system. This early intervention catches errors before they spread, ensuring that problems are addressed before they can impact decision-making or downstream processes. Early validation is crucial to preventing small issues from becoming bigger problems.

- AI and Automation: Manual checks can’t keep up with the speed and volume of today’s data. Automated systems, powered by machine learning, can detect anomalies, analyze patterns, and apply validation rules in real time. This approach speeds up data processing while improving accuracy and saving valuable time and resources.

- Complete Data Coverage: Modern validation doesn’t just check a small sample of data but ensures 100% of incoming data is validated. This thorough approach guarantees that no error is overlooked and that the data you’re working with is always reliable and accurate.

- Contextual Data Understanding: AI-powered validation goes beyond simple format checks. It understands the meaning behind the data and can flag inconsistencies or values that don’t make sense. For example, AI can spot data points that fall outside expected ranges, preventing outliers that traditional validation methods might miss.

Critical Types of Validation Checks

- Format and Pattern Matching: Ensures that data follows a specific structure, such as a valid email address or phone number, ensuring consistency across your systems.

- Range and Constraints: Validates that numerical data falls within set parameters, like pricing or quantity limits, preventing unrealistic or faulty data from entering the system.

- Uniqueness and Cardinality: Guarantees that each data point like customer IDs or transaction numbers is unique, preventing duplicates that could cause confusion.

- Referential Integrity: Validates relationships between different data sets (e.g., matching customer details to their order information) to ensure consistency and correctness.

- Consistency Across Systems: Ensures data is consistent across various platforms. For example, customer data in your CRM system should match the data in your billing system.

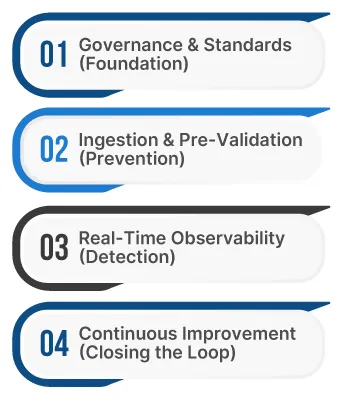

The Ultimate Framework to Prevent Bad Data Early in the Process

To ensure high-quality data from the outset, it’s essential to build a data validation framework that addresses issues before they cause damage. This is often referred to as the “Shift-Left” approach, which focuses on addressing data quality at the point of ingestion. By catching problems early, businesses save time, reduce costs, and make sure data is reliable and ready for critical decisions.

1. Governance & Standards (Foundation)

- Establish Clear Data Contracts: Set standards for data quality by defining agreements with both internal teams and third-party data providers. This ensures that data entering the system is aligned with business goals and operational requirements.

- Define Critical Data Elements: Identify the most important data points, such as customer information or financial data, and ensure these elements are validated as they enter the system. This helps avoid major disruptions down the line.

- Assign Data Stewards: Assign individuals responsible for overseeing the accuracy and integrity of the data. Data stewards are accountable for enforcing validation processes and ensuring quality standards are consistently met.

2. Ingestion & Pre-Validation (Prevention)

- Schema Enforcement: Ensure that only data that fits predefined structures is accepted. By validating data at the point of ingestion, you can catch errors before they enter your systems and cause downstream issues.

- Apply Data Validation Rules: Set up rules that validate incoming data to ensure it adheres to expected formats and ranges. This step prevents incorrect data, such as invalid dates or out-of-range numbers, from entering the system.

- Use Quarantine Queues: For data that fails validation, place it in a quarantine area where it can be reviewed and corrected before it enters the main database. This ensures no incorrect data makes its way into production.

3. Real-Time Observability (Detection)

- Automated Anomaly Detection: Use machine learning models to detect unusual patterns, like sudden spikes in data or unexpected changes, as soon as they occur. Early detection prevents issues from snowballing into larger problems.

- Monitor Data Drift: Track how data distributions change over time. If data starts to shift away from the expected patterns, it may indicate a deeper issue with the data source that needs to be addressed immediately.

- Track Data Lineage: Visualize the flow of data across systems. Understanding where data originates and how it travels helps pinpoint where errors occur, making it easier to fix issues at the source.

4. Continuous Improvement (Closing the Loop)

- Feedback Loops: Build systems for users to report issues with data. These real-time reports allow teams to act quickly and ensure that data quality issues are identified and addressed before they affect operations.

- Post-Mortem Analysis: When problems occur, analyze the root cause and put in place corrective measures to prevent them from happening again. This analysis is essential for continuous improvement.

- Data Quality Scorecards: Use dashboards that track key data quality metrics, such as accuracy and consistency, to assess the health of your data pipeline. These scorecards help highlight areas that need improvement.

Key Tools & Technologies

- Data Observability: Tools like Monte Carlo, Bigeye, and Acceldata help monitor data quality in real time, ensuring that data remains accurate and reliable as it moves through your pipeline.

- Data Validation: Platforms like Great Expectations, Deequ, and Apache Spark enable automated data validation, making it easier to check that data meets quality standards before it’s processed.

- Streaming & Ingestion Management: Solutions like Confluent and DooQ ensure that data streams are validated before they reach your systems, keeping the data pipeline clean and reliable.

- Data Governance: Tools like Alation, Collibra, and Data360 help enforce data governance policies and ensure compliance, making it easier to maintain high-quality data across the organization.

How Organizational and Governance Excellence Drives Data Trust

Strong governance and clear ownership are key to ensuring data remains reliable and trustworthy throughout its lifecycle. By setting clear rules for data management and ensuring consistent accountability, enterprises can build a foundation of trust in their data.

- Clear Ownership and Accountability: Assigning specific people or teams to be responsible for different types of data ensures accountability, with no ambiguity about who is responsible for keeping data accurate and reliable.

- Comprehensive Data Governance Policies: Establishing policies around who can access data, how it should be used, and how it must be protected ensures that data is handled ethically and consistently across the organization.

- Shared Metrics for Success: Tracking data quality through KPIs and dashboards provides teams with visibility into data health, helping them monitor and improve the data throughout its lifecycle.

- Continuous Feedback Loops: Regular feedback from stakeholders ensures that data issues are spotted early and fixed quickly, keeping data quality in check over time.

- Building a Data-Driven Culture: When everyone in the organization understands the importance of high-quality data, it becomes easier to maintain consistency and reliability in the data being used for business decisions.

How TxMinds Helps You Prevent Bad Data Early with Scalable Solutions

Here at TxMinds, our wide array of solutions guarantees that your data is accurate and reliable at all stages of its lifecycle. We implement an automated system for validating data during ingestion and proactively ensure that all data being used for analysis and ML modeling is clean and of the highest quality.

As your company expands, we at TxMinds grow right alongside you, ensuring that your data is of high quality and scalable.

FAQs

-

Poor data quality can lead to significant financial losses, with over 25% of organizations losing more than USD 5 million annually due to data issues.

-

Catching data issues early through validation prevents costly mistakes, delays, and operational disruptions, offering a more cost-effective solution.

-

AI-powered automation ensures real-time data validation, improving accuracy and efficiency in detecting anomalies and applying validation rules.

-

Establishing clear ownership, data governance policies, and regular feedback loops ensures data reliability, accuracy, and compliance across the organization.

Discover more