Top Data Trends 2026: What Decision Makers Need to Prioritize Now

Money is flowing into data engineering for a reason. The global data engineering services market is projected to reach $213 billion by 2031.

That kind of growth does not happen because companies want engaging dashboards. It happens because the basics are now business-critical. If the data platform is slow, fragile, or expensive, everything built on top of it suffers, including AI, customer experience, and decision-making.

In 2026, leaders are looking for platforms that stay reliable during peak demand, keep data governed without slowing teams down, and scale without turning cloud spend into a surprise. The winners will not be the teams that collect the most data. They will be the ones who can deliver trusted data quickly and consistently across the business.

This blog breaks down the top data engineering trends to watch in 2026 and what they mean for decision makers planning the next wave of growth.

Key Takeaways

- The data engineering services market is projected to hit $213B by 2031 because reliable, scalable data foundations are now business-critical.

- In 2026, production AI demands fresh, accurate, always-available data with governance (contracts, observability, policy-as-code) built into pipelines.

- Data orgs are shifting to “platform as a product” with self-service, shared components, and clear reliability targets so teams can move faster.

- Architectures are moving toward real-time processing, open lakehouse table formats, and zero-ETL integration, with FinOps discipline to prevent cloud spend surprises.

Why 2026 is a Defining Year for Data Engineering

Several big shifts are happening at the same time.

First, most companies are moving past small AI experiments and into day-to-day AI delivery. Once models are in production, the basics have to work every time. Data needs to be accurate, fresh, and available when it is needed. Real-time pipelines are becoming the norm because they support live inference, retrieval-based systems like RAG, and models that are tuned for specific business domains.

Second, the way data teams build and deliver capabilities is changing. Platform engineering is pushing data platforms to act more like products. Instead of stitching together one-off pipelines for each request, teams are building shared components, clear interfaces, and self-service tools. They are also putting commitments in place, like defined reliability targets and predictable performance, so internal users can plan around the platform.

Third, governance and compliance are moving to the front of the line. Privacy rules are expanding across regions, and where data lives is now a board-level topic for many organizations. That means guardrails cannot be bolted on later. Access controls, auditability, and policy checks need to be built into the workflows engineers use every day.

Taken together, 2026 is shaping up to be the year many organizations finally replace patchwork data setups with platforms designed for AI from the start, run with product discipline, and built with governance baked in.

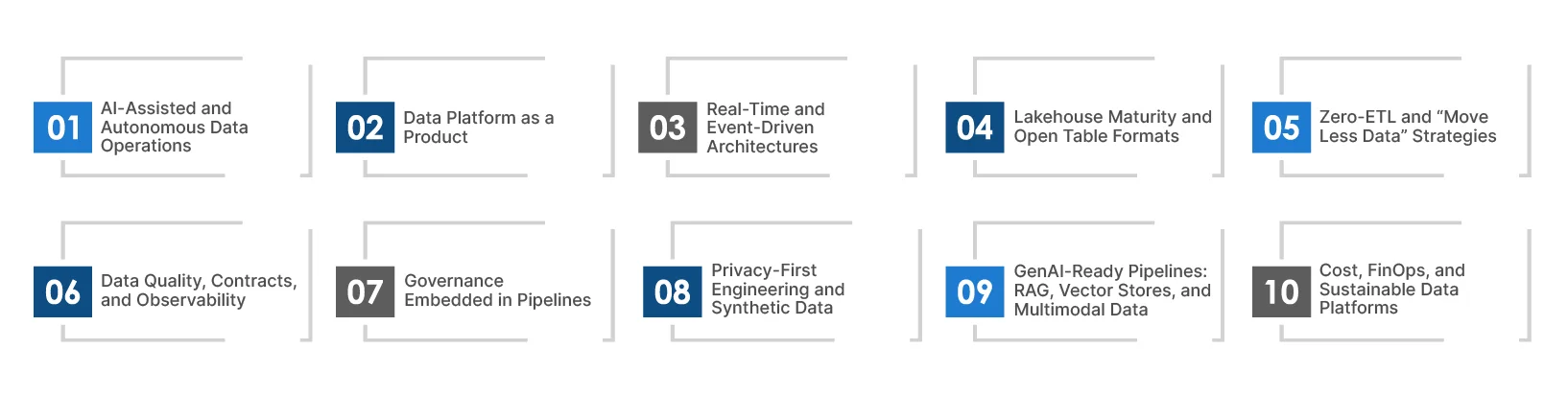

Top Data Engineering Trends to Watch in 2026

The data engineering trends below represent structural changes in how modern data platforms are designed, governed, and scaled.

For decision-makers, understanding these trends is less about technology preference and more about building a durable competitive advantage.

1. AI-Assisted and Autonomous Data Operations

AI is starting to show up inside the day-to-day work of data engineering. Copilots can draft transformation code, tune SQL, and keep pipeline docs up to date. They can even flag when a schema change is likely and suggest a safer path.

The bigger shift is in operations. Modern observability tools use ML to spot unusual patterns early, predict when a pipeline is about to break, and sometimes fix the issue automatically. These self-healing pipelines cut down on firefighting, keep data fresher, and improve uptime.
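To make the idea of spotting unusual patterns concrete, here is a minimal sketch. It uses a plain z-score check on daily row counts as a stand-in for the ML models that commercial observability tools use. The function name, the threshold, and the sample counts are all illustrative assumptions, not taken from any specific product.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, threshold=3.0):
    """Return True if `latest` is more than `threshold` standard
    deviations away from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat history: any change at all is unusual.
        return latest != mu
    return abs(latest - mu) / sigma > threshold


# Hypothetical row counts from the last seven pipeline runs.
daily_row_counts = [10_120, 9_980, 10_050, 10_200, 9_940, 10_080, 10_010]

print(is_anomalous(daily_row_counts, 10_100))  # a typical day
print(is_anomalous(daily_row_counts, 4_300))   # a sudden drop in volume
```

A check like this would normally run right after a load finishes, so a sudden drop in volume raises an alert before downstream reports or models consume the data.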

For enterprises running AI at scale, this means fewer late-night incidents, faster recovery when something does go wrong, and more confidence that business reports and model outputs are not being thrown off by silent data issues.

2. Data Platform as a Product

Many organizations are moving to centralized data platform teams that run data infrastructure like a product. They focus on internal user experience, a clear roadmap, solid versioning, and adoption metrics.

Instead of every domain rebuilding the same pipelines, the platform provides shared ingestion patterns, standard transformation layers, and consistent access controls. Domain teams still own their data products and quality through clear data contracts. This split helps the data stack scale without slowing teams down.

3. Real-Time and Event-Driven Architectures

Real-time is no longer reserved for trading systems and fraud detection. A lot of everyday business systems now need it too, like product recommendations, supply chain planning, equipment monitoring, and customer engagement.

Most companies end up running a mix of streaming and batch. They use tools like event streams and change data capture (CDC) alongside scheduled jobs, and the hard part is keeping everything aligned. You still need consistent definitions, end-to-end lineage, and sane schema management so the streaming and batch worlds do not drift apart.

Teams that get this right see results sooner. They can react faster, learn quicker, and stay ahead of competitors that are still waiting on yesterday’s data.

4. Lakehouse Maturity and Open Table Formats

The lakehouse approach has stopped being experimental for many teams. Instead of treating the data lake as “cheap storage” and the warehouse as “where the real work happens,” organizations are standardizing on open table formats like Apache Iceberg, Delta Lake, and Apache Hudi. These formats bring the basics you expect in production: reliable transactions, safer schema changes, and better performance as data grows.

Another reason they are popular is flexibility. Open formats make it easier to switch tools, run across clouds, and avoid getting boxed into one vendor, which matters more now that portability and data residency are front and center.

With one storage layer handling both structured and unstructured data, teams can simplify the stack, tighten governance, and keep costs under control.

5. Zero-ETL and “Move Less Data” Strategies

Moving data around is expensive, and it creates problems. Every copy adds delay, drives up storage and egress costs, and increases the chance of leaks or mismatched versions. That is why more teams are looking at zero-ETL setups, data virtualization, and federated query engines to cut down on replication.

Instead of pushing data into yet another warehouse or lake, they focus on connecting to it where it already lives, sharing it securely, and spinning up compute only when needed. The payoff is fewer redundant datasets, a cleaner security story, and answers delivered faster because you are not waiting on another pipeline to finish.
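As a toy illustration of querying data where it lives, the sketch below uses SQLite's `ATTACH DATABASE` to join two separate databases in a single query without copying either into a third store. This is only an analogy for what a federated query engine does across real systems. The `crm` and `billing` names, the tables, and the rows are made up for the example.

```python
import sqlite3

# One connection acts as the "query engine"; the attached databases
# stand in for two independent source systems.
conn = sqlite3.connect(":memory:")
conn.execute("ATTACH DATABASE ':memory:' AS crm")
conn.execute("ATTACH DATABASE ':memory:' AS billing")

conn.execute("CREATE TABLE crm.customers (id INTEGER PRIMARY KEY, name TEXT)")
conn.execute("CREATE TABLE billing.invoices (customer_id INTEGER, amount REAL)")

conn.executemany(
    "INSERT INTO crm.customers VALUES (?, ?)",
    [(1, "Acme"), (2, "Globex")],
)
conn.executemany(
    "INSERT INTO billing.invoices VALUES (?, ?)",
    [(1, 120.0), (1, 80.0), (2, 50.0)],
)

# A single query spans both sources; no pipeline copies data between them.
rows = conn.execute(
    """
    SELECT c.name, SUM(i.amount) AS total
    FROM crm.customers AS c
    JOIN billing.invoices AS i ON i.customer_id = c.id
    GROUP BY c.name
    ORDER BY c.name
    """
).fetchall()

print(rows)
```

In production, the same pattern usually involves a distributed query engine and governed sharing rather than a single-process database, but the principle is the same: compute goes to the data.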

6. Data Quality, Contracts, and Observability

As data powers revenue-generating systems and AI models, quality becomes a business-critical metric. Observability frameworks now monitor completeness, accuracy, timeliness, and distribution drift across datasets.

Data contracts formalize expectations between producers and consumers, preventing downstream failures caused by schema changes or undocumented transformations. End-to-end lineage mapping supports auditability and impact analysis. Together, these capabilities elevate data reliability to the same level of rigor as application reliability.
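A data contract can be as simple as a declared list of fields that producers agree to honor and that consumers can check against. The sketch below is a minimal, hypothetical version. `ContractField`, `ORDER_CONTRACT`, and `violations` are illustrative names, and real contracts usually also cover semantics, freshness, and ownership.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContractField:
    name: str
    type_: type
    required: bool = True


# Hypothetical contract for an "orders" dataset.
ORDER_CONTRACT = [
    ContractField("order_id", str),
    ContractField("amount", float),
    ContractField("coupon", str, required=False),
]


def violations(record, contract):
    """Return a list of human-readable contract violations for one record."""
    problems = []
    for field in contract:
        value = record.get(field.name)
        if value is None:
            if field.required:
                problems.append(f"missing required field: {field.name}")
            continue
        if not isinstance(value, field.type_):
            problems.append(
                f"{field.name}: expected {field.type_.__name__}, "
                f"got {type(value).__name__}"
            )
    return problems


print(violations({"order_id": "A1", "amount": 19.99}, ORDER_CONTRACT))
print(violations({"order_id": "A2", "amount": "19.99"}, ORDER_CONTRACT))
```

Running a check like this at the producer's boundary means a type change fails fast at the source instead of surfacing later as a broken dashboard or a skewed model.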

7. Governance Embedded in Pipelines

Governance can no longer be an afterthought layered on top of analytics systems. Policy-as-code frameworks allow access controls, retention rules, and compliance requirements to be codified directly within CI/CD pipelines.

Automated enforcement ensures that data sharing complies with internal policies and regulatory mandates. Continuous compliance scanning, audit trails, and metadata-driven classification systems create a governance-by-design approach that reduces regulatory risk.
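One way to picture policy-as-code is as a check that runs in CI against every dataset definition before it ships. The sketch below assumes a hypothetical `POLICIES` table and dataset config shape. The classifications, retention limits, and role names are invented for illustration and do not reflect any particular framework or regulation.

```python
# Hypothetical policy table keyed by data classification.
POLICIES = {
    "pii": {
        "max_retention_days": 365,
        "allowed_roles": {"privacy_officer", "data_steward"},
    },
    "internal": {
        "max_retention_days": 1825,
        "allowed_roles": {"analyst", "data_steward", "privacy_officer"},
    },
}


def check_dataset(config):
    """Return policy violations for one dataset config (empty list = compliant)."""
    policy = POLICIES.get(config["classification"])
    if policy is None:
        return [f"unknown classification: {config['classification']}"]

    errors = []
    if config["retention_days"] > policy["max_retention_days"]:
        errors.append(
            f"retention {config['retention_days']}d exceeds limit of "
            f"{policy['max_retention_days']}d"
        )
    extra_roles = set(config["roles"]) - policy["allowed_roles"]
    if extra_roles:
        errors.append(f"roles not allowed: {sorted(extra_roles)}")
    return errors


# A CI job could fail the build whenever this list is non-empty.
print(check_dataset(
    {"classification": "pii", "retention_days": 400, "roles": ["analyst"]}
))
```

Because the rules live in version control next to the pipeline code, every policy change gets the same review and audit trail as any other change.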

8. Privacy-First Engineering and Synthetic Data

With regulatory landscapes expanding, privacy-enhancing technologies (PETs) such as differential privacy, secure multi-party computation, and tokenization are becoming engineering considerations rather than legal afterthoughts.

Synthetic data generation is also gaining adoption, particularly for testing AI models or sharing datasets across organizational boundaries. By decoupling analytics value from personally identifiable information (PII), enterprises can innovate while preserving trust.
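As a small example of separating analytics value from PII, the sketch below replaces an identifier with a keyed, deterministic token using HMAC-SHA256 from Python's standard library. This is keyed pseudonymization, one simple vaultless form of tokenization. The `tokenize` function, the `tok_` prefix, and the sample key are assumptions for illustration, and a real deployment would pull the key from a secrets manager.

```python
import hashlib
import hmac


def tokenize(value: str, key: bytes) -> str:
    """Map a sensitive value to a stable token that cannot be reversed
    without the key. The same input and key always give the same token,
    so joins and counts still work on tokenized data."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "tok_" + digest[:16]


# Illustrative key only; never hard-code a real key.
SECRET_KEY = b"example-key-for-illustration"

token_a = tokenize("jane@example.com", SECRET_KEY)
token_b = tokenize("jane@example.com", SECRET_KEY)
token_c = tokenize("john@example.com", SECRET_KEY)

print(token_a == token_b)  # same person, same token
print(token_a == token_c)  # different people, different tokens
```

Analysts can still count distinct customers or join tables on the token, but the raw email address never leaves the ingestion layer.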

9. GenAI-Ready Pipelines: RAG, Vector Stores, and Multimodal Data

Generative AI initiatives are changing what data pipelines need to handle. Unstructured data, such as documents, transcripts, logs, and multimedia files, must be cleaned, broken into usable chunks, indexed properly, and enriched with the right metadata to support retrieval-augmented generation (RAG) systems.
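The chunking and metadata step can be sketched in a few lines. The version below splits text into fixed-size, overlapping character windows and attaches a source and offset to each chunk. The function name, the sizes, and the metadata fields are illustrative assumptions, and production pipelines often chunk by tokens, sentences, or document structure instead.

```python
def chunk_text(text, source, size=200, overlap=50):
    """Split `text` into overlapping chunks and tag each with metadata
    that a retrieval system can use for filtering and citation."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")

    chunks = []
    step = size - overlap
    for index, start in enumerate(range(0, len(text), step)):
        chunks.append(
            {
                "id": f"{source}#{index}",
                "source": source,
                "start": start,
                "text": text[start:start + size],
            }
        )
        if start + size >= len(text):
            break  # the last window already reached the end of the text
    return chunks


document = "Quarterly maintenance report. " * 15  # 450 characters
for chunk in chunk_text(document, source="report-q1.txt"):
    print(chunk["id"], chunk["start"], len(chunk["text"]))
```

The overlap keeps a sentence that straddles a boundary retrievable from either side, and the `source` and `start` fields let an answer point back to where it came from.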

Vector databases and feature stores both play an important role here. Vector databases hold the embeddings that retrieval depends on, while feature stores help keep training and inference environments consistent. Pipelines that can process text, images, telemetry, and audio are quickly becoming part of standard data platforms. Teams that focus on data freshness, strong evaluation processes, and accurate retrieval will see real business returns from their GenAI investments.

10. Cost, FinOps, and Sustainable Data Platforms

Cloud-native architectures and advanced analytics workloads significantly increase compute consumption. Without disciplined FinOps practices, costs can escalate rapidly.

Leading organizations are implementing workload isolation, cost attribution models, and unit economics metrics such as cost per query, cost per pipeline run, or cost per model inference. Automated scaling, scheduling controls, and storage tiering help balance performance with fiscal responsibility. Sustainability considerations, including energy efficiency and carbon footprint visibility, are increasingly influencing architectural decisions as well.
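Unit economics come down to simple division, but writing it out shows what has to be tracked. The sketch below computes cost per unit for three workloads. All workload names and figures are made-up placeholders, not benchmarks.

```python
def unit_cost(total_cost_usd, units):
    """Cost per unit of work (query, pipeline run, inference, ...)."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total_cost_usd / units


# Hypothetical monthly figures, attributed per workload.
monthly_usage = {
    "warehouse_queries": {"cost_usd": 12_000.0, "units": 480_000},
    "pipeline_runs": {"cost_usd": 3_000.0, "units": 1_500},
    "model_inferences": {"cost_usd": 5_000.0, "units": 2_000_000},
}

unit_costs = {
    workload: unit_cost(usage["cost_usd"], usage["units"])
    for workload, usage in monthly_usage.items()
}

for workload, cost in unit_costs.items():
    print(f"{workload}: ${cost:.4f} per unit")
```

The calculation only works if cost attribution is already in place, which is why tagging and workload isolation tend to come first.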

How to Prioritize These Trends: A Practical 2026 Roadmap

You cannot chase every trend at once without spreading teams and budgets thin. Start with the basics, so AI and analytics have stable, trusted data to run on. Then upgrade how the platform is owned and delivered so it can scale. Once that is in place, expand into real-time systems and GenAI pipelines. Keep a close eye on costs throughout, so progress does not turn into overspend.

- Begin with reliability and governance by investing in observability, enforcing data contracts, and embedding policy controls directly into pipelines so AI systems can scale safely and predictably.

- Modernize the operating model by establishing a centralized data platform team, defining clear service-level objectives, and treating the platform as a product with measurable adoption and performance goals.

- Expand into real-time streaming and GenAI-ready architectures once the foundation is stable, ensuring that new capabilities are built on trusted and well-governed data.

- Implement FinOps discipline across workloads by tracking unit economics, optimizing compute consumption, and continuously aligning performance with cost efficiency.

How TxMinds Helps You Build a 2026-Ready Data Platform

At TxMinds, we help teams get their data engineering house in order so they can move faster without losing control. We modernize legacy warehouses into cloud data platforms, build data lakes that can handle structured and unstructured data, and set up real-time and batch pipelines that teams can trust in production.

We also support end-to-end ETL and data warehouse work with a focus on clean integration, consistent quality, and an architecture that scales with your business.

The goal is simple. We help you turn scattered data into a dependable foundation for analytics, reporting, and AI initiatives, while keeping performance and cloud costs in check.

FAQs

- Why is 2026 a defining year for data engineering?

Because companies are moving from AI pilots to production, which makes reliable, governed, fresh data non-negotiable for day-to-day operations.

- What does "data platform as a product" mean?

It means running the data platform like an internal product with self-service tools, shared components, clear interfaces, versioning, and reliability targets (SLOs).

- How do companies combine real-time and batch processing?

They use a hybrid of streaming/event-driven systems and scheduled jobs, while enforcing consistent schemas, lineage, and definitions so streams and batches don’t drift.

- How can organizations keep cloud data costs under control?

By applying FinOps practices like workload isolation, cost attribution, and unit economics (e.g., cost per query, pipeline run, or model inference) plus autoscaling and storage tiering.
